If you think of your DNA as a massive library holding thousands of recipe books (your genes), then a gene expression profile is a list of which books are currently open on the counter and how splattered their pages are. It’s a snapshot in time.

It’s not just about what recipes a cell has access to, but which ones it’s actually using at that very moment.

What Is a Gene Expression Profile Anyway?

This "cellular activity report" is what gives a cell its unique identity and function. It’s the reason a muscle cell is busy making contractile proteins while a neuron is firing off neurotransmitters, even though both cells contain the exact same genetic blueprint.

The profile reveals which genes are switched on (transcribed into RNA) and at what intensity. It’s a dynamic, quantitative look at the cell's internal operations.

The Foundation of Cellular Identity

The ability to generate these profiles didn't just appear overnight; it stands on the shoulders of giants. The real groundwork was laid in the 1960s, starting with the landmark identification of messenger RNA (mRNA) in 1961 as the critical messenger between DNA and the protein-making machinery.

Just five years later, in 1966, scientists finally cracked the genetic code, figuring out how RNA's nucleotide triplets (codons) translate into specific amino acids. You can dive deeper into the fascinating history of these genetic discoveries on Wikipedia. These breakthroughs gave us the rulebook for how cells operate, making today's profiling techniques possible.

A gene expression profile provides an incredibly rich dataset, telling us about a cell’s:

- Identity: What kind of cell is it and what "job" is it doing?

- Activity Level: Is it resting, proliferating, or stressed?

- Response: How is it reacting to a new drug, a change in nutrients, or a disease state?

By measuring the amount of each specific mRNA molecule, we get a detailed, quantitative portrait of a cell’s dynamic internal world.

Why Gene Expression Profiling Matters in the Lab

For any lab working with mammalian cell cultures, this information is gold. In academic research, comparing gene activity between healthy and diseased cells is the bread and butter of discovering the molecular drivers behind an illness. It can point directly to new drug targets or clarify how an existing therapeutic actually works.

In bioproduction and for contract development and manufacturing organizations (CDMOs), gene expression profiling is a non-negotiable tool for quality control and process optimization.

If your CHO cell line suddenly isn't producing a therapeutic protein at the expected titer, its expression profile can tell you why. It might reveal that a metabolic pathway is out of whack or a cellular stress response has been triggered. This data lets you troubleshoot production issues with precision, lock in batch-to-batch consistency, and build more robust biomanufacturing processes from the ground up.

Choosing Your Toolkit For Gene Expression Analysis

Selecting the right tool for gene expression profiling is like choosing the right lens for a camera—you have to match the technology to the research question. A magnifying glass, a wide-angle lens, and a high-resolution satellite map each tell a different story.

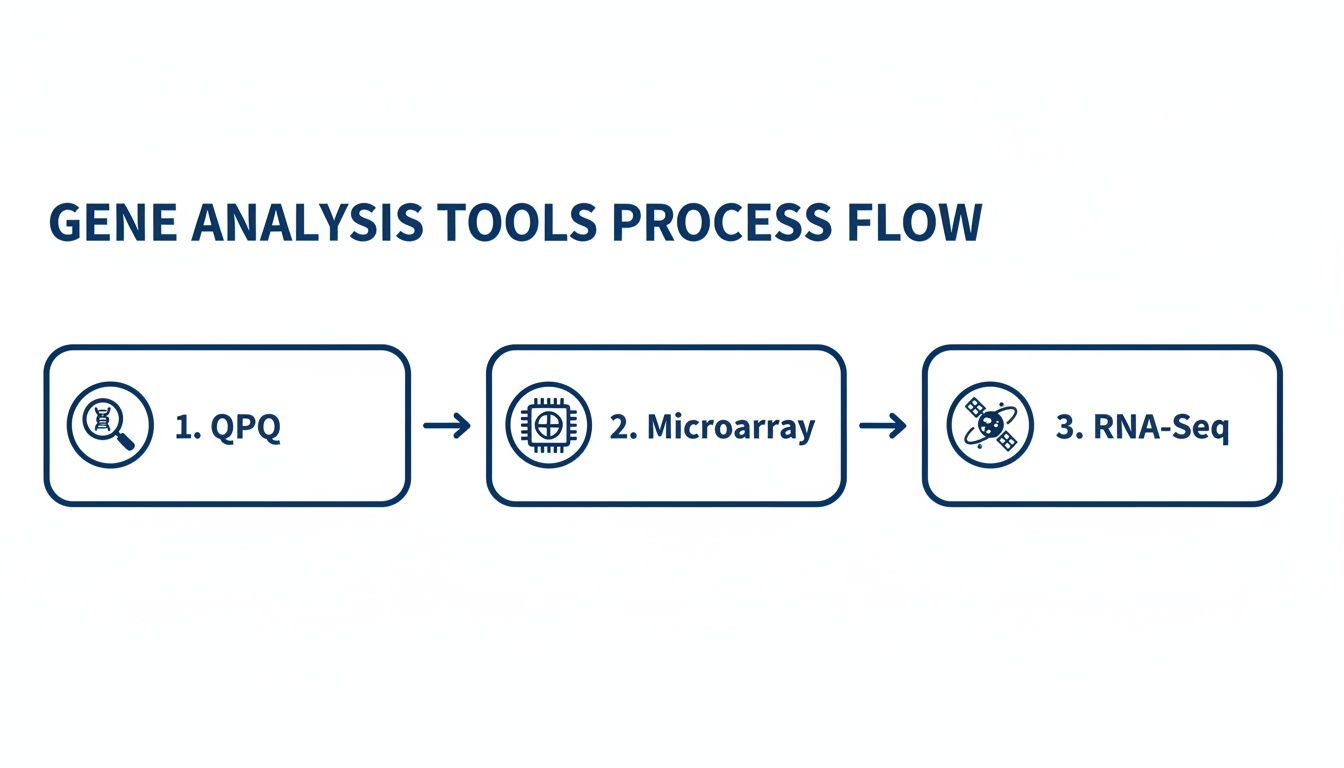

The three workhorses of gene expression analysis—qPCR, microarrays, and RNA-Seq—are no different. Understanding their specific strengths and limitations is the first step in designing a powerful and cost-effective experiment. Each one offers a distinct balance of scope, sensitivity, and cost.

qPCR: The Precision Magnifying Glass

Quantitative Polymerase Chain Reaction, or qPCR, is the scientist’s magnifying glass. It’s the go-to method when you need to measure the expression of a small, specific set of genes with outstanding precision and sensitivity.

Imagine you’ve just run a screen and have a hypothesis that a single gene is driving a cell's resistance to a new drug. qPCR lets you zoom in on that one gene and get a highly accurate count of its activity. It’s fast, relatively inexpensive, and the absolute gold standard for validating findings from larger, more exploratory studies.

The major trade-off? Its focus is extremely narrow. You can only find what you already know to look for.
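To make "a highly accurate count of its activity" concrete, here is a minimal sketch of one widely used way to turn qPCR cycle threshold (Ct) values into a relative fold change, the 2^-ΔΔCt calculation. The function name and the Ct values are hypothetical, and the sketch assumes roughly 100% amplification efficiency and a stable reference (housekeeping) gene.

```python
def relative_expression(ct_target_treated, ct_ref_treated,
                        ct_target_control, ct_ref_control):
    """Fold change of a target gene using the 2^-ddCt calculation.

    Assumes the PCR product doubles every cycle (about 100% efficiency)
    and that the reference gene is unaffected by the treatment.
    """
    # Normalize the target gene to the reference gene within each condition
    delta_ct_treated = ct_target_treated - ct_ref_treated
    delta_ct_control = ct_target_control - ct_ref_control
    # Compare the treated condition against the control condition
    delta_delta_ct = delta_ct_treated - delta_ct_control
    return 2 ** (-delta_delta_ct)


# Hypothetical Ct values, for illustration only
fold_change = relative_expression(22.0, 18.0, 24.0, 18.0)
print(fold_change)  # 4.0
```

A lower Ct means the signal crossed the detection threshold earlier, so a target that appears two cycles sooner in the treated sample works out to roughly four times more transcript.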

Microarrays: The Wide-Angle Lens

If qPCR is a magnifying glass, a microarray is a wide-angle camera lens. This technology lets you measure the expression levels of thousands of predefined genes at the same time, giving you a broad overview of what’s happening in the cell.

A microarray chip contains thousands of microscopic spots, and each spot holds a known DNA probe for a specific gene. When you apply your fluorescently labeled sample, the amount of material that hybridizes to each spot, read out as signal intensity, reveals that gene’s expression level. It’s a solid approach for getting a semi-quantitative snapshot of major changes across established biological pathways.

But it’s a closed system. Its biggest drawback is that you can only detect genes that are already included on the array, meaning you’ll miss any novel or unexpected gene activity.

RNA-Seq: The Unbiased Satellite Map

Next-generation sequencing of RNA, or RNA-Seq, is the high-resolution satellite map of the transcriptome. Unlike qPCR and microarrays, RNA-Seq is a largely unbiased, discovery-driven method: instead of looking for a predefined set of targets, it sequences a deep sample of the RNA molecules in your library.

This gives you a comprehensive and quantitative view of the entire gene expression landscape.

RNA-Seq not only quantifies known genes with high accuracy but can also discover entirely new genes, alternative splicing events, and other RNA variants that other methods would miss. This makes it an incredibly powerful tool for exploratory research.

The trade-off for this incredible depth of information is cost and data complexity. RNA-Seq generates massive datasets that demand significant computational power and bioinformatics expertise to analyze properly. Low-abundance transcripts can still go undetected (sometimes called "dropout") when sequencing is too shallow, but modern RNA-Seq largely overcomes this issue, provided the sequencing depth is adequate.

To help you decide which tool fits your project, here’s a head-to-head comparison of how these technologies stack up.

Comparison of Gene Expression Profiling Technologies

This table breaks down the key features of each method, helping you align the technology with your specific research goals and budget.

| Feature | qPCR | Microarray | RNA-Sequencing (RNA-Seq) |
|---|---|---|---|
| Primary Use | Validating specific gene targets | Surveying thousands of known genes | Comprehensive transcriptome discovery and quantification |
| Scope | Targeted (1 to 100s of genes) | Broad but predefined (1000s of genes) | Unbiased (whole transcriptome) |
| Sensitivity | Very high for target genes | Moderate; struggles with low-abundance transcripts | High; can be adjusted by sequencing depth |
| Discovery Potential | None; purely for quantification of known targets | Low; limited to genes on the array | Very high; detects novel genes and isoforms |
| Cost Per Sample | Low | Moderate | High |
| Data Analysis | Simple and straightforward | Moderately complex | Highly complex; requires bioinformatics expertise |

Ultimately, the best choice depends on what you need to achieve. For validating a few key biomarkers, qPCR is perfect. For a broad survey of known pathways on a budget, microarrays can still offer a cost-effective solution.

But for a deep, unbiased exploration of the complete cellular response, RNA-Seq is the undisputed modern standard.

Your Lab Workflow From Cell Culture To Sequencing Library

Generating a gene expression profile that you can trust starts long before you see a single data point. The entire process, from a healthy plate of cells to a sequencing-ready library, is a chain of events where meticulous lab work is your only insurance against bad data.

Think of it this way: even the most powerful sequencer in the world can't fix a poorly prepared sample. It’s the classic “garbage in, garbage out” principle, applied to the wet lab. The whole workflow is built around one goal: preserving the incredibly fragile RNA molecules that represent that specific snapshot in your cells' life.

This is the hands-on part of the process, turning living cells into stable genetic material that a machine can actually read. Every step, from how you harvest your cells to the final library prep, is a control point that will make or break the quality of your final data.

Sample Collection And RNA Extraction

This is where most experiments fail before they even begin. RNA is notoriously unstable and starts degrading the second you disrupt a cell's protective environment. If you’re slow, or your collection times are inconsistent, you’re introducing massive variability that will drown out the real biological signals you’re trying to find.

The only way to succeed is to work quickly and consistently. Flash-freeze your cell pellets in liquid nitrogen the moment you harvest them, or immediately treat them with a dedicated RNA stabilization reagent. This instantly locks the cellular machinery in place, preserving the mRNA transcripts you care about.

Once your samples are stabilized, the next challenge is RNA extraction. The goal is simple but tricky: get pure, intact RNA without any contaminating DNA, proteins, or other cellular junk. The quality of your extraction dictates the success of everything that follows. Using high-purity reagents, like those from PurMa Biologics, is non-negotiable if you want to avoid introducing nucleases that will chew up your precious RNA.

Quality Control: The Moment Of Truth

After extraction comes quality control (QC). This isn't an optional "nice-to-have" step; it's your last chance to make sure your sample is even worth sequencing before you blow a significant chunk of your budget.

Two key numbers tell you everything you need to know about your RNA's health:

- Purity: A spectrophotometer (like a NanoDrop) measures this. It tells you if your sample is contaminated with proteins or chemicals left over from the extraction. You’re looking for an A260/A280 ratio around 2.0 and an A260/A230 ratio between 2.0 and 2.2.

- Integrity: An automated electrophoresis system (like a Bioanalyzer or TapeStation) assesses this, giving you an RNA Integrity Number (RIN). The RIN score goes from 1 (completely degraded) to 10 (perfectly intact). For any RNA-Seq experiment, you should demand a RIN of 7 or higher.

A low RIN score is a dealbreaker. It means your RNA is fragmented. Pushing forward with a low-RIN sample will give you a skewed profile, which in poly(A)-selected libraries typically over-represents the 3' ends of genes and misses other critical data.

Ignoring bad QC metrics is like pouring a concrete foundation on sand. It’s just not going to work. It is always, always better to go back and prep a new sample than to push forward with low-quality material.
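The QC thresholds above are easy to encode as a go/no-go check before any sample is sent for sequencing. The sketch below is illustrative only: the function name is hypothetical, and the 1.9 to 2.1 window used for "around 2.0" is an assumption that your own lab’s SOP may define differently.

```python
def rna_passes_qc(a260_a280, a260_a230, rin, min_rin=7.0):
    """Return (passed, reasons) for one RNA sample.

    Thresholds follow the text: A260/A280 around 2.0, A260/A230 between
    2.0 and 2.2, RIN of 7 or higher. The 1.9-2.1 window for "around 2.0"
    is an assumed tolerance, not a standard.
    """
    reasons = []
    if not 1.9 <= a260_a280 <= 2.1:
        reasons.append(f"A260/A280 of {a260_a280} is outside 1.9-2.1")
    if not 2.0 <= a260_a230 <= 2.2:
        reasons.append(f"A260/A230 of {a260_a230} is outside 2.0-2.2")
    if rin < min_rin:
        reasons.append(f"RIN of {rin} is below {min_rin}")
    return (len(reasons) == 0, reasons)


# Hypothetical samples, for illustration only
print(rna_passes_qc(2.0, 2.1, 8.5))
print(rna_passes_qc(1.7, 2.1, 5.2))
```

Returning the list of reasons alongside the pass/fail flag makes it obvious whether a failed sample has a purity problem, an integrity problem, or both.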

Library Preparation: Turning RNA Into Data

The last step in the lab is library preparation. This is where your purified RNA is converted into a much more stable molecule called complementary DNA (cDNA). This happens through a series of enzymatic reactions that also attach "adapters"—short, known DNA sequences—to the ends of each cDNA fragment.

These adapters are critical. They let each fragment attach to the sequencing surface and give the instrument a known starting point for reading it. Adapters also carry short index sequences that act like barcodes, tagging every molecule with the sample it came from. That makes multiplexing possible, which is where you can pool dozens of samples together and sequence them all in one go, saving a massive amount of time and money.
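To show how barcoding makes pooled sequencing workable, here is a toy sketch of demultiplexing: sorting pooled reads back into their samples by index sequence. The sample names, the four-base indexes, and the `(index, sequence)` read representation are all hypothetical simplifications; real indexes are longer and production tools usually tolerate a mismatch.

```python
# Hypothetical sample sheet: index (barcode) sequence -> sample name
SAMPLE_INDEXES = {"ACGT": "control_1", "TGCA": "treated_1"}


def demultiplex(reads, index_to_sample):
    """Group pooled reads by sample using exact index matching.

    `reads` is an iterable of (index_sequence, insert_sequence) tuples.
    Reads whose index is not on the sample sheet go to "undetermined".
    """
    by_sample = {}
    for index_seq, insert_seq in reads:
        sample = index_to_sample.get(index_seq, "undetermined")
        by_sample.setdefault(sample, []).append(insert_seq)
    return by_sample


pooled_reads = [("ACGT", "GATTACA"), ("TGCA", "CCGGTTA"), ("GGGG", "TTTTTTT")]
print(demultiplex(pooled_reads, SAMPLE_INDEXES))
```

Keeping an "undetermined" bin is a useful habit, since a large share of unassigned reads is often the first hint of an index or sample-sheet mistake.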

The infographic here shows how different analysis methods relate to each other, moving from a narrow, targeted look to a wide, comprehensive map of the transcriptome.

The success of this entire workflow builds on massive genomics projects. Launched in 1990, the Human Genome Project gave us the reference map we still use today, with the first draft published in 2001. Other projects, like sequencing the yeast, chicken, and chimpanzee genomes, expanded this toolkit, allowing us to build the sophisticated cellular models we rely on for disease research. You can find more on these milestones in genomic research on genome.gov.

Turning Raw Sequencing Data Into Actionable Insights

When the sequencer finishes, you get a massive, almost chaotic file filled with millions of short genetic reads. This is where the real work—the bioinformatics—begins. It’s the process of taking that digital noise and turning it into a coherent biological story.

This isn’t some mysterious black box. It’s a logical series of steps designed to clean, organize, and interpret the data, ultimately revealing your gene expression profile.

Think of it like assembling a giant jigsaw puzzle without the picture on the box. You first have to sort the pieces, find the edges, and then start building out the image. The first steps in data analysis are exactly like that: all about quality control and getting everything organized.

The journey starts with the raw sequence files, usually in a format called FASTQ. These files contain both the nucleotide sequences and a quality score for every single base. The first job is to clean them up.
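For a feel of what a FASTQ record actually looks like, here is a minimal sketch that reads four-line records and decodes the per-base quality string. It assumes the common Phred+33 encoding and well-formed, unwrapped records; the function name and the example read are made up for illustration.

```python
import io


def read_fastq(handle):
    """Yield (read_id, sequence, phred_scores) from a FASTQ text stream.

    Assumes four lines per record and Phred+33 quality encoding.
    """
    while True:
        header = handle.readline().strip()
        if not header:
            break  # end of file
        sequence = handle.readline().strip()
        handle.readline()  # the '+' separator line carries no data here
        quality = handle.readline().strip()
        # Phred+33: quality score = ASCII code of the character minus 33
        scores = [ord(char) - 33 for char in quality]
        yield header[1:], sequence, scores


# A single made-up record, for illustration only
example = io.StringIO("@read1\nACGT\n+\nII5#\n")
for read_id, sequence, scores in read_fastq(example):
    print(read_id, sequence, scores)  # read1 ACGT [40, 40, 20, 2]
```

A Phred score of Q corresponds to an error probability of 10^(-Q/10), so Q20 means roughly a 1-in-100 chance that the base call is wrong and Q40 about 1 in 10,000.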

From Raw Reads to Aligned Counts

The very first bioinformatics step is a critical quality check. Specialized software scans the raw data to trim away any low-quality bases, which often hang out at the ends of reads, and snip off any leftover adapter sequences from the library prep stage.

This ensures only high-quality data moves forward. It’s an essential step to prevent technical artifacts from muddying your final results.
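The trimming logic can be sketched in a few lines. This is a deliberately simplified illustration, not how any particular trimming tool works internally: it only finds exact, full-length adapter matches and uses a simple 3'-end quality cutoff. The adapter string, the Q20 cutoff, and the function name are assumptions chosen for the example.

```python
def trim_read(sequence, scores, adapter, min_quality=20):
    """Remove adapter read-through, then trim low-quality bases from the 3' end.

    Simplified sketch: exact adapter matching only, and a fixed quality
    cutoff instead of a sliding window.
    """
    # Step 1: cut the read where the adapter sequence begins, if present
    adapter_start = sequence.find(adapter)
    if adapter_start != -1:
        sequence = sequence[:adapter_start]
        scores = scores[:adapter_start]
    # Step 2: walk back from the 3' end while base quality is too low
    end = len(sequence)
    while end > 0 and scores[end - 1] < min_quality:
        end -= 1
    return sequence[:end], scores[:end]


# Made-up read: 8 genomic bases followed by a placeholder adapter sequence
adapter = "AGATCGGAAGAGC"
read = "ACGTACGT" + adapter
quals = [38] * 6 + [12, 10] + [30] * len(adapter)
print(trim_read(read, quals, adapter))
```

In this example the adapter is removed first, and the last two genomic bases are then dropped because their scores fall below the cutoff, leaving six high-quality bases.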

Once the reads are clean, they need a map. This is called alignment, where each of those millions of short reads is mapped back to its original location on a reference genome. Imagine having a million tiny sentence fragments and a complete reference book—alignment is the process of figuring out where each fragment belongs.

Once the reads are aligned, a counting step tallies how many of them fall within each annotated gene. This raw count is the first measure of that gene’s expression level, but it’s not quite ready for comparisons yet.
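Here is a toy sketch of how aligned reads become per-gene counts. It assumes alignment has already happened and reduces each read to a chromosome and a single position; the gene coordinates and names are invented, and real counting tools also deal with strand, exons, and reads that overlap more than one gene.

```python
# Hypothetical annotation: gene -> (chromosome, start, end), 1-based inclusive
GENES = {"geneA": ("chr1", 100, 500), "geneB": ("chr1", 800, 1200)}


def count_reads(alignments, genes):
    """Count aligned reads per gene.

    `alignments` is an iterable of (chromosome, position) tuples, one per
    read. Reads that fall outside every annotated gene are ignored.
    """
    counts = {gene: 0 for gene in genes}
    for chrom, position in alignments:
        for gene, (gene_chrom, start, end) in genes.items():
            if chrom == gene_chrom and start <= position <= end:
                counts[gene] += 1
                break  # assign each read to at most one gene
    return counts


# Made-up alignments, for illustration only
aligned = [("chr1", 150), ("chr1", 450), ("chr1", 900), ("chr2", 150), ("chr1", 600)]
print(count_reads(aligned, GENES))  # {'geneA': 2, 'geneB': 1}
```

Two of the five reads land outside any annotated gene and are simply not counted, which is why the assigned total is usually smaller than the number of aligned reads.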

Normalization: Leveling the Playing Field

Before you can confidently compare your control group to your treated group, you have to perform a crucial step called normalization. Imagine you're comparing the reading habits of two people, but one of them read from a library with ten times as many books as the other. You can't just compare the number of books read; you have to adjust for the different library sizes.

Normalization does exactly that for your sequencing data. It mathematically adjusts the raw gene counts to account for technical variations, most importantly the differences in sequencing depth between your samples.

Without proper normalization, you might mistakenly conclude a gene is upregulated in one sample when, in reality, that sample was just sequenced more deeply. This step ensures that any differences you observe are due to real biology, not technical noise.
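The library-size adjustment can be illustrated with counts per million (CPM), one of the simplest normalization schemes. The sketch below uses invented counts in which the treated sample was sequenced ten times deeper but has exactly the same composition. CPM is chosen here only because it is easy to follow; dedicated differential expression packages use more robust methods.

```python
def counts_per_million(raw_counts):
    """Scale raw gene counts to counts per million mapped reads (CPM)."""
    library_size = sum(raw_counts.values())
    return {
        gene: count * 1_000_000 / library_size
        for gene, count in raw_counts.items()
    }


# Made-up counts: same biology, but "treated" was sequenced 10x deeper
control = {"geneA": 100, "geneB": 900}
treated = {"geneA": 1000, "geneB": 9000}

print(counts_per_million(control))  # {'geneA': 100000.0, 'geneB': 900000.0}
print(counts_per_million(treated))  # {'geneA': 100000.0, 'geneB': 900000.0}
```

On raw counts, geneA looks ten-fold higher in the treated sample. After scaling by library size, the two samples are identical, which is the correct conclusion for this made-up data.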

Once your data is properly normalized, you can get to the exciting part: finding out which genes actually changed.

Finding the Story in Your Data

With a clean, normalized dataset in hand, you can finally start asking the biological questions that drove the experiment. The analysis shifts from data processing to statistical testing and pattern recognition, helping you build a compelling narrative from the numbers.

The typical downstream analysis involves a few key steps:

- Differential Expression Analysis: This is the heart of most gene expression experiments. Statistical tests are run to pinpoint which genes show a significant change, either up or down, between your experimental groups. The output is a list of genes with their corresponding fold change and a p-value (ideally adjusted for the thousands of genes tested at once), which tells you how statistically significant that change is.

- Clustering and Visualization: How do the samples relate to each other as a whole? Techniques like Principal Component Analysis (PCA) and hierarchical clustering group samples based on the similarity of their overall expression profiles. These visualizations, often shown as heatmaps or PCA plots, give you a bird's-eye view of your data, helping you spot patterns, outliers, and unwanted batch effects.

- Pathway and Enrichment Analysis: A long list of differentially expressed genes is a great start, but it doesn't tell a complete story. Pathway analysis puts this list into a biological context. It determines if your changed genes are over-represented in specific, known biological pathways, such as metabolic processes, signaling cascades, or immune responses. This critical step helps you move from "which genes changed?" to "what biological processes were affected?"

This multi-step approach is how you transform a massive spreadsheet of numbers into a clear, data-driven narrative about your cells' response—giving you the actionable insights your research depends on.
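The "over-represented" test at the core of pathway analysis is often framed as a hypergeometric (one-sided Fisher) test: if you drew your changed genes at random, how likely is it that this many would land in the pathway? The sketch below is a minimal version under that assumption; the function name and the gene numbers are invented, and real enrichment tools add multiple-testing correction across all pathways tested.

```python
from math import comb


def enrichment_p_value(total_genes, pathway_genes, changed_genes, overlap):
    """Probability of seeing at least `overlap` pathway genes among the
    changed genes if the changed genes were drawn at random
    (upper tail of the hypergeometric distribution).
    """
    max_overlap = min(pathway_genes, changed_genes)
    tail = sum(
        comb(pathway_genes, k)
        * comb(total_genes - pathway_genes, changed_genes - k)
        for k in range(overlap, max_overlap + 1)
    )
    return tail / comb(total_genes, changed_genes)


# Made-up numbers: 20,000 genes measured, 100 in the pathway,
# 200 differentially expressed, 10 of which are in the pathway
p_value = enrichment_p_value(20_000, 100, 200, 10)
print(f"{p_value:.2e}")
```

By chance alone you would expect about one pathway gene in a list of 200 (200 × 100 / 20,000), so seeing ten gives a very small p-value and flags the pathway as a candidate worth a closer look.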

How To Interpret Your Results And Avoid Common Pitfalls

Getting that list of differentially expressed genes can feel like you’ve crossed the finish line. In reality, you’ve just made it to the starting blocks. The real art of creating a useful gene expression profile is turning that spreadsheet of gene names and numbers into a coherent biological story.

This is where the cold, hard science of statistics meets the nuanced art of interpretation. It’s a critical step, and one where it’s easy to get lost. Chasing low p-values without seeing the bigger picture can send you down a rabbit hole, wasting time and resources on dead-end leads.

Balancing The Statistical And The Biological

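Your analysis boils down to two key numbers for every gene: the p-value and the fold change. A low p-value (usually <0.05) tells you that a change is statistically significant, meaning it’s unlikely to be random noise. Because thousands of genes are tested at once, that cutoff should be applied to p-values adjusted for multiple testing (often reported as an adjusted p-value or false discovery rate); otherwise, many genes will cross the line by chance alone. But statistical significance is not the same thing as biological importance.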

For example, a gene might have a tiny p-value but only shift its expression by a factor of 1.1. Is that really a meaningful biological event? Probably not. On the other hand, a gene that doubles its expression (a 2-fold change) but has a p-value of 0.06 might be incredibly relevant, even if it just misses that arbitrary statistical cutoff.

A truly compelling gene expression profile hinges on finding genes that have both strong statistical backing (a low p-value) and a meaningful magnitude of change (a substantial fold change).

This two-filter approach is your best bet for focusing on the genes most likely to be driving the biology you’re trying to understand.
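The two-filter idea can be sketched as follows. Since thousands of genes are tested at once, this version first adjusts the p-values with the Benjamini-Hochberg procedure and then applies both cutoffs. The thresholds (adjusted p of 0.05 or less and at least a 2-fold change in either direction), the function names, and the example results are all assumptions for illustration.

```python
import math


def benjamini_hochberg(p_values):
    """Return Benjamini-Hochberg adjusted p-values in the original order."""
    n = len(p_values)
    order = sorted(range(n), key=lambda i: p_values[i])
    adjusted = [0.0] * n
    running_min = 1.0
    # Walk from the largest p-value to the smallest, enforcing monotonicity
    for rank in range(n, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, p_values[i] * n / rank)
        adjusted[i] = running_min
    return adjusted


def select_genes(results, max_adj_p=0.05, min_abs_log2fc=1.0):
    """Keep genes that pass both the statistical and the magnitude filter.

    `results` is a list of (gene, fold_change, p_value) tuples.
    """
    adjusted = benjamini_hochberg([p for _, _, p in results])
    selected = []
    for (gene, fold_change, _), adj_p in zip(results, adjusted):
        log2fc = math.log2(fold_change)  # symmetric for up and down changes
        if adj_p <= max_adj_p and abs(log2fc) >= min_abs_log2fc:
            selected.append(gene)
    return selected


# Made-up results, for illustration only
results = [
    ("geneA", 4.0, 0.0001),  # large change, strong evidence
    ("geneB", 1.1, 0.0002),  # strong evidence, tiny change
    ("geneC", 2.0, 0.06),    # 2-fold change, weak evidence
    ("geneD", 0.25, 0.001),  # 4-fold decrease, strong evidence
]
print(select_genes(results))  # ['geneA', 'geneD']
```

Working on the log2 scale treats a 4-fold decrease the same as a 4-fold increase. A gene like geneC, which misses the statistical cutoff but shows a solid fold change, is exactly the kind worth noting for follow-up with more replicates.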

The Non-Negotiable Role of Replicates

One of the single biggest mistakes in gene expression studies is cutting corners on biological replicates. Trying to draw conclusions from just one or two samples per condition is a recipe for disaster. Biological variability is a fundamental truth; cells are not perfect, identical machines.

Without at least three biological replicates for each group, you simply don’t have the statistical power to tell real biological changes apart from random cellular noise. Replicates are what make the statistics work, giving you confidence that your findings are real and not just a one-off fluke.

It also helps to appreciate the deep history behind these expression patterns. Gene regulation evolves over immense timescales. Some fascinating studies show that the median times for significant gene expression changes during cellular evolution can stretch from 405 to 966 million years. This context—knowing that some genes are ancient and highly conserved while others are more dynamic—helps you make sense of the changes you see in your cultures. You can dive deeper into these evolutionary insights into gene regulation to appreciate these complex dynamics.

Common Pitfalls And How To Sidestep Them

Beyond the stats, a few common technical traps can completely sabotage your interpretation. Knowing what they are is the first step to avoiding them.

- Batch Effects: This is a classic lab blunder. If you process your control samples on Monday and your treated samples on Friday, you've just introduced a massive batch effect. Any differences you find could be from the prep day, not your experiment. Process all your samples in a single batch whenever you can. If you can't, spread every condition across the batches and record the batch for each sample so that statistical methods can correct for it; when batch and condition overlap completely, no correction can pull them apart.

- Forgetting Validation: RNA-Seq results are powerful, but they should always be treated as a well-supported hypothesis, not a final answer. The gold standard is to validate your most interesting genes with a different, targeted method like qPCR. That independent confirmation is what turns a good story into a bulletproof, publishable one.

- Ignoring Data Visualization: Don’t just live in the spreadsheet. Tools like heatmaps and Principal Component Analysis (PCA) plots are your best friends. A PCA plot will tell you in seconds if your replicates are clustering together and if your experimental groups are separating as you'd expect. It’s the fastest way to spot an outlier or a major batch effect before you waste weeks analyzing flawed data.
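Before reaching for a full PCA plot, a quick sample-to-sample correlation check can answer the same first question: do replicates resemble each other more than they resemble the other group? The sketch below is a simpler stand-in for PCA, not PCA itself, and the sample names and log2 expression values are invented for illustration.

```python
import math


def pearson(x, y):
    """Pearson correlation between two equal-length expression vectors."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)


def nearest_neighbours(profiles):
    """For each sample, report the other sample it correlates with best."""
    nearest = {}
    for name, values in profiles.items():
        others = {
            other: pearson(values, other_values)
            for other, other_values in profiles.items()
            if other != name
        }
        nearest[name] = max(others, key=others.get)
    return nearest


# Made-up log2 normalized expression for four genes per sample
PROFILES = {
    "control_1": [5.0, 8.0, 2.0, 7.0],
    "control_2": [5.2, 7.9, 2.1, 7.1],
    "treated_1": [8.0, 5.0, 6.0, 3.0],
    "treated_2": [7.8, 5.1, 6.2, 3.1],
}
print(nearest_neighbours(PROFILES))
```

If a control sample turns out to be closest to a treated sample, or samples pair up by prep day instead of by condition, that is the cue to investigate an outlier, a sample swap, or a batch effect before going any further.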

Real World Applications In Research And Bioproduction

The true value of a gene expression profile isn't realized until it moves off a hard drive and starts solving a real-world problem. This isn't just an abstract academic exercise. It's a practical, high-resolution tool that labs and biomanufacturers use every day to make tough, data-driven decisions.

Think of it as a molecular diagnostic for your cells. It gives you an unbiased look under the hood, letting you connect a physical outcome—like disease symptoms or a drop in protein production—directly to its underlying genetic drivers.

In any research setting, this is a game-changer. When scientists want to get to the bottom of a disease, one of the first things they do is compare the gene expression of healthy cells to diseased ones. This head-to-head comparison shows them exactly which genetic circuits have gone haywire.

Accelerating Drug Discovery And Development

Profiling is also a cornerstone of modern drug discovery. After identifying a potential therapeutic target, researchers need to know if their drug candidate actually works as intended and, just as importantly, what unintended side effects it might have.

By generating a gene expression profile from cells treated with a new compound, they can:

- Confirm the Mechanism of Action: They can verify that the drug is hitting its intended pathway and creating the desired molecular effect.

- Identify Off-Target Effects: At the same time, they can screen for unwanted changes in known toxicity pathways, giving them critical safety data early in the development pipeline.

This single step can dramatically shorten the journey from a promising compound to a safe, effective therapy, saving an enormous amount of time and money.

Generating a gene expression profile provides a high-resolution map of a cell's response to treatment. It reveals not only if a drug works but how it works at the molecular level.

Optimizing Bioproduction And Quality Control

For biomanufacturing facilities and CDMOs, gene expression profiling is an indispensable tool for process optimization and quality control. Mammalian cell lines like Chinese Hamster Ovary (CHO) cells are living factories, and their productivity can be incredibly sensitive to subtle changes in their environment.

Imagine this scenario: a well-established CHO cell line that has been a reliable workhorse suddenly shows a 25% drop in antibody production. Without a clear diagnostic tool, troubleshooting is just a frustrating guessing game. Is it the media? The temperature? A genetic mutation?

By generating a gene expression profile of the underperforming cells and comparing it to a "gold standard" batch, the problem often becomes crystal clear. The data might show that a key metabolic pathway is bottlenecked, or that the cells have flipped on a stress response that's diverting energy away from making your protein. This allows the team to fix the specific problem instead of blindly testing one variable after another.

And in the booming field of cell therapy, ensuring the final product is both safe and effective is non-negotiable. Profiling confirms that therapeutic cells have the correct identity and haven't picked up any dangerous characteristics during manufacturing, providing a critical layer of quality control before they ever reach a patient.

Frequently Asked Questions About Gene Expression Profiling

Running your first gene expression study can feel like navigating a minefield of new terms and technical decisions. Here are some of the most common questions we hear, with straightforward answers to help you avoid the pitfalls that can derail an experiment.

How Many Biological Replicates Do I Really Need?

That old "rule of three" you've heard? It’s a starting point, not a law. While three biological replicates per condition might be the textbook minimum, the right number is all about the specifics of your experiment.

If you’re working with highly controlled, immortalized cell lines in vitro, you might get away with three clean replicates. But if you're dealing with primary cells, in vivo samples, or trying to detect subtle changes in gene expression, you'll need more firepower. Expect to use five or even eight replicates to get the statistical power you need to see a real signal through the biological noise.

What Is The Difference Between A Transcriptome And A Gene Expression Profile?

These two terms get used interchangeably, and they are closely related, but they aren’t quite the same thing. Think of it this way:

The transcriptome is the complete set of RNA transcripts present in a cell or cell population at a given moment, including every isoform and non-coding RNA. A gene expression profile is the quantitative readout drawn from it: which genes are being transcribed, and at what level, under one specific condition.

In short, the transcriptome is everything that’s open on the counter right now. The gene expression profile is the measurement of how heavily each recipe is being used, usually summarized per gene and compared across conditions.

Can I Use My Frozen Cell Pellets For This?

Absolutely. But success is 100% dependent on how you froze them. RNA is incredibly fragile and can start degrading within minutes of harvest. Your cell pellets must be flash-frozen in liquid nitrogen the instant you harvest them. Slow freezing creates ice crystals that will tear your cells apart and unleash the very RNases you want to avoid.

For the best shot at high-quality data, thaw the frozen pellet directly into an RNA lysis buffer loaded with RNase inhibitors. This protects the RNA the moment the cells thaw, preserving its integrity and making sure your stored samples yield a profile you can trust.
